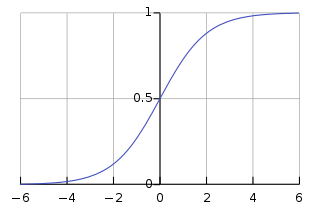

Sigmoid

The sigmoid, defined as

$$\sigma(x) = \frac{1}{1 + e^{-x}},$$

is a non-linear function that suffers from saturation.

Saturation of activation

An activation saturates when its gradient is almost zero over some region of its input.

This is an undesirable property: gradients backpropagated through a saturated unit are close to zero, so the weights feeding it learn slowly.
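
To make saturation concrete, here is a minimal sketch in plain Python that evaluates the sigmoid and its derivative at a few points. The helper names `sigmoid` and `sigmoid_grad` are illustrative choices, not part of any library, and the sample inputs are arbitrary.

```python
import math

def sigmoid(x: float) -> float:
    """Logistic sigmoid: 1 / (1 + exp(-x))."""
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_grad(x: float) -> float:
    """Derivative of the sigmoid: s(x) * (1 - s(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)

# The gradient is largest at x = 0 and shrinks quickly as |x| grows.
for x in (0.0, 2.0, 5.0, 10.0):
    print(f"x = {x:5.1f}  sigmoid = {sigmoid(x):.5f}  gradient = {sigmoid_grad(x):.6f}")
```

The gradient at zero is 0.25, the largest value it can take, and it falls off rapidly for large inputs, which is the saturated regime described above.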


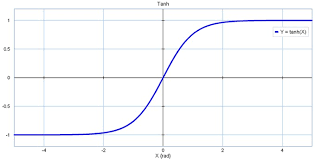

Tanh

This non-linearity squashes a real-valued number to the range $(-1, 1)$.

Like the sigmoid neuron, its activations saturate, but unlike the sigmoid neuron its output is zero-centered.
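
Both properties can be checked numerically. The sketch below uses only the standard library; `tanh_grad` is a hypothetical helper name and the symmetric sample inputs are chosen for illustration.

```python
import math

def tanh_grad(x: float) -> float:
    """Derivative of tanh: 1 - tanh(x)^2."""
    return 1.0 - math.tanh(x) ** 2

def sigmoid(x: float) -> float:
    """Logistic sigmoid, included only for comparison."""
    return 1.0 / (1.0 + math.exp(-x))

inputs = [-3.0, -1.0, 0.0, 1.0, 3.0]  # symmetric around zero

# Zero-centered: tanh outputs average to about 0, sigmoid outputs to about 0.5.
tanh_mean = sum(math.tanh(x) for x in inputs) / len(inputs)
sigmoid_mean = sum(sigmoid(x) for x in inputs) / len(inputs)
print("mean tanh output:   ", tanh_mean)
print("mean sigmoid output:", sigmoid_mean)

# Saturation: the gradient is 1 at x = 0 and small for large |x|.
for x in inputs:
    print(f"x = {x:5.1f}  tanh = {math.tanh(x): .5f}  gradient = {tanh_grad(x):.5f}")
```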


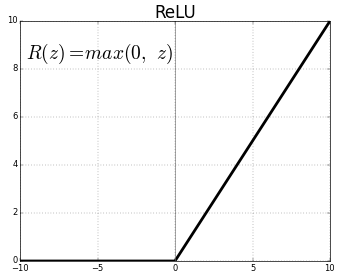

ReLU

The most popular non-linearity in modern deep learning, partly due to its non-saturating nature, defined as

$$f(x) = \max(0, x).$$
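
As a minimal sketch, ReLU and its slope can be written in a few lines of plain Python. The names `relu` and `relu_grad` are illustrative, and the slope at exactly zero is set to 0 by convention, since the function is not differentiable there.

```python
def relu(x: float) -> float:
    """Rectified linear unit: max(0, x)."""
    return max(0.0, x)

def relu_grad(x: float) -> float:
    """Slope of ReLU: 1 for positive inputs, 0 otherwise (0 at x = 0 by convention)."""
    return 1.0 if x > 0 else 0.0

# For positive inputs the slope stays at 1 however large x becomes,
# so the positive side does not saturate.
for x in (-2.0, 0.5, 10.0, 1000.0):
    print(f"x = {x:7.1f}  relu = {relu(x):7.1f}  gradient = {relu_grad(x):.1f}")
```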


Dead filter

A filter whose output is always negative, so ReLU maps it to zero no matter what the input is. Because the gradient of ReLU is zero for negative inputs, backpropagation never updates the filter; eventually, due to weight decay, its weights shrink to zero and it "dies".
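
The mechanism can be illustrated with a toy simulation. The sketch below is a single unit rather than a real convolutional filter, and every number in it (the weights, bias, learning rate, weight decay, and the pretend upstream gradient of 1) is an assumption made for illustration. Weight decay is applied to the weights only.

```python
import random

random.seed(0)

# Hypothetical unit: negative weights and a negative bias.
weights = [-0.5, -0.8]
bias = -1.0
learning_rate = 0.1
weight_decay = 0.01  # applied to the weights only (an assumption)

# Non-negative inputs, e.g. outputs of an earlier ReLU layer.
inputs = [[random.random(), random.random()] for _ in range(100)]

total_data_grad = 0.0  # accumulated |dL/dw| contributed by the data
max_activation = 0.0

for epoch in range(50):
    for x in inputs:
        z = sum(w * xi for w, xi in zip(weights, x)) + bias
        activation = max(0.0, z)            # ReLU
        max_activation = max(max_activation, activation)

        upstream = 1.0                      # pretend dL/d(activation) = 1
        relu_slope = 1.0 if z > 0 else 0.0  # zero for negative pre-activations
        data_grad = [upstream * relu_slope * xi for xi in x]
        total_data_grad += sum(abs(g) for g in data_grad)

        # SGD step: the data gradient is zero, so only weight decay acts.
        weights = [w - learning_rate * (g + weight_decay * w)
                   for w, g in zip(weights, data_grad)]

print("largest activation seen:", max_activation)
print("total data gradient:", total_data_grad)
print("weights after training:", weights)
```

In this setup the pre-activation is negative for every input, so the unit never fires, the data never contributes a gradient, and weight decay alone pulls the weights toward zero.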

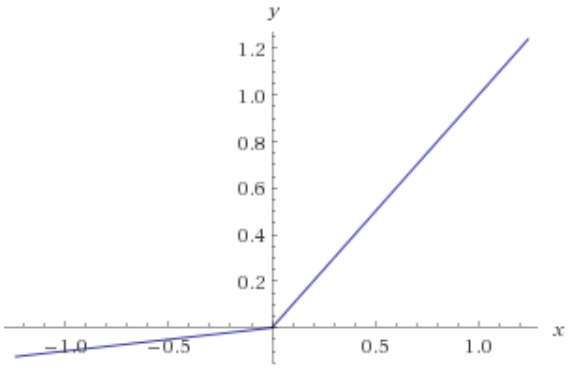

Leaky ReLU

A possible fix to the dead filter problem is to define ReLU with a small slope in the negative part, i.e.,

$$f(x) = \max(\alpha x, x)$$

for a small constant $0 < \alpha < 1$.
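
A minimal sketch of this variant in plain Python follows. The function names are illustrative, and the default slope `alpha = 0.01` is an assumed, commonly used value rather than one given in the text.

```python
def leaky_relu(x: float, alpha: float = 0.01) -> float:
    """Leaky ReLU: x for positive inputs, alpha * x otherwise."""
    return x if x > 0 else alpha * x

def leaky_relu_grad(x: float, alpha: float = 0.01) -> float:
    """Slope of leaky ReLU: 1 for positive inputs, alpha otherwise."""
    return 1.0 if x > 0 else alpha

# The slope on the negative side is small but non-zero, so a unit with
# negative pre-activations still receives a gradient and can recover.
for x in (-5.0, -1.0, 0.5, 3.0):
    print(f"x = {x:5.1f}  leaky_relu = {leaky_relu(x): .3f}  gradient = {leaky_relu_grad(x):.2f}")
```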
